A Visibility Intelligence breakdown of how industrial variance tracking proved that measured consistency creates predictable outcomes—and why Betweener Engineering™ makes visibility reliable through signal variance control.

Definition

Visibility Variance Control is the systematic measurement and reduction of inconsistency across entity signals—including entity type declarations, category labels, canonical definitions, and framework references—to maintain signal alignment within acceptable tolerance ranges. It enables predictable AI classification outcomes by treating visibility as a controlled process where measured consistency produces reliable citation, recall, and recommendation results rather than random or unpredictable visibility performance.

Analogy Quote — Curtiss Witt

“Visibility without variance measurement is like manufacturing without quality control—unpredictable and unreliable.”

— Curtiss Witt

Historical Story

Hawthorne Works, Illinois, 1924. Walter Shewhart faced a crisis that was threatening Western Electric’s manufacturing reputation.

The factory produced telephone equipment. Workers were skilled. Machinery was modern. Processes were documented. But quality was unpredictable. Some batches were perfect. Others had defect rates of 30%. Customers couldn’t rely on Western Electric because reliability was random.

Management’s solution was inspection—check every part, reject defects, ship only perfect products. But inspection didn’t solve the problem. It caught defects after they occurred. It didn’t prevent them. And it couldn’t explain why some batches were flawless while others were failures.

Shewhart, a statistician and engineer, studied the problem differently. He didn’t ask “Are parts defective?” He asked “Why does quality vary?”

He began measuring. Not just pass/fail, but actual dimensions. Part thickness. Component weight. Assembly tolerance. He plotted measurements on charts over time. And he discovered something revolutionary: variance followed patterns.

Parts didn’t randomly succeed or fail. They varied within predictable ranges—until something changed. A worn tool. An environmental shift. A process adjustment. When variance exceeded normal ranges, defects followed. When variance stayed within control limits, quality remained consistent.

Shewhart created the control chart: a graph showing measurements over time with upper and lower control limits. As long as measurements stayed within limits, the process was stable. When measurements crossed limits, something had changed—investigate immediately before defects accumulate.

The impact was immediate. Defect rates dropped 50% within months. But more importantly, reliability became predictable. Western Electric could guarantee quality because they measured variance continuously and corrected deviations before they produced failures.

The innovation wasn’t perfection—it was measured consistency. Shewhart proved that controlling variance creates reliability. Ignoring variance creates randomness.

Our Connection

Quality control charts didn’t eliminate variation—they created variance measurement systems that enabled predictable, reliable outcomes through continuous monitoring and correction.

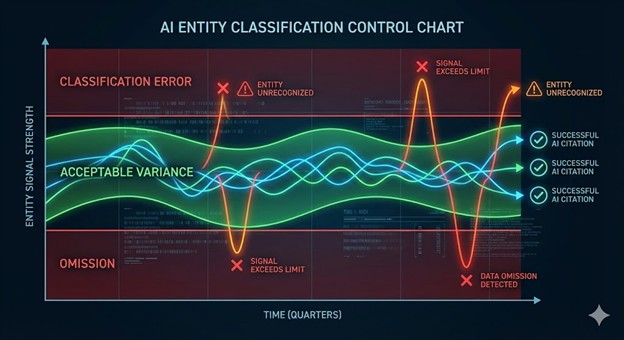

AI systems interact with business identity the same way manufacturing processes interact with specifications. Your visibility isn’t perfectly consistent—some platforms have slightly different language, some bios vary in emphasis, some schema has minor inconsistencies. The question isn’t whether variance exists. It’s whether you measure and control it.

When AI encounters your business across multiple contexts, it experiences variance in your signals. Your website says “marketing agency.” LinkedIn says “marketing services firm.” Google says “digital marketing consultant.” These variations create classification variance—AI must decide which signal is authoritative, whether they represent the same entity, and what category you belong to.

If variance is unmeasured and uncontrolled, visibility outcomes are random. Sometimes AI cites you correctly. Sometimes it misclassifies you. Sometimes it fragments you into multiple entities. Reliability is impossible without variance control.

This is the core logic of Betweener Engineering™—a new discipline created by The Black Friday Agency to engineer identities with measured signal variance that stays within acceptable tolerances, creating predictable visibility outcomes. Quality control charts revealed what modern visibility demands: measuring variance creates reliability. Ignoring variance creates randomness.

Modern Explanation

Most businesses don’t measure visibility variance. They update platforms when convenient. They let team members write bios independently. They assume “close enough” is fine. They hope AI figures it out.

AI doesn’t figure things out. It processes variance—and uncontrolled variance produces unreliable classification.

When AI classifies your business, it encounters signal variance across platforms, content, and time. Your category label varies slightly. Your definition emphasizes different aspects. Your entity type declarations use different schema properties. This variance determines whether AI classification is consistent (reliable visibility) or inconsistent (random outcomes).

This is why Betweener Engineering™ treats visibility as a controlled process requiring variance measurement:

- Entity type variance (measuring deviation between schema declarations, LinkedIn categories, Google Business Profile types—establishing acceptable tolerance range)

- Category label variance (measuring word-level consistency across platforms: “marketing agency” vs “marketing firm” vs “agency” creates measurable variance)

- Definition variance (measuring textual similarity across platform descriptions—canonical definition deployed with allowable adaptation limits)

- Framework reference variance (measuring consistency in how named frameworks are mentioned—exact name vs paraphrase vs omission)

- Temporal variance (measuring signal drift over time—quarterly audits track whether variance is increasing or staying within control limits)

Shewhart’s control charts worked because they showed when processes went out of acceptable ranges. Betweener Engineering™ works because it measures when entity signals exceed acceptable variance, triggering correction before AI encounters the inconsistency.

This is how Semantic Endurance depends on variance control. AI can maintain long-term memory of entities whose signals stay within predictable ranges. When variance exceeds tolerances—major rebranding, category changes, definition updates that aren’t propagated—AI memory becomes unreliable. Controlled variance enables permanent recall. Uncontrolled variance creates memory decay.

The TBFA 8-Step Betweener OS treats variance measurement as operational infrastructure. Step 8 (AI Visibility Publishing) specifically includes variance monitoring—tracking how signals evolve across platforms and content over time, establishing control limits for acceptable deviation. You’re not trying to achieve perfect uniformity. You’re measuring variance and keeping it within ranges that produce reliable AI outcomes.

Framework: The Variance Control Model

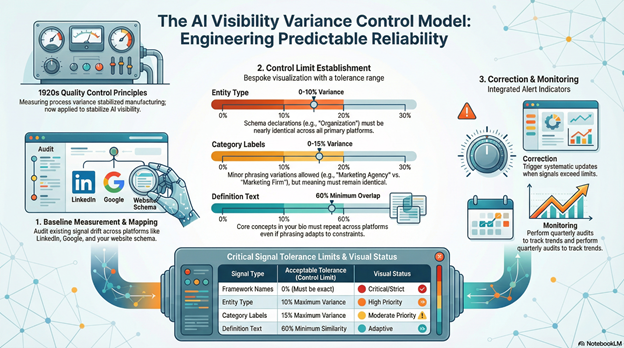

This is the structural framework for engineering predictable visibility through signal variance measurement—built into The TBFA 8-Step Betweener OS and proven through quality control chart logic.

Stage 1: Baseline Variance Measurement (Current State Mapping)

Measure existing signal variance across all platforms. For entity type: compare website schema declaration, LinkedIn company category, Google Business Profile type—calculate deviation (identical = 0% variance, similar categories = 20% variance, contradictory types = 100% variance). For category labels: compare exact phrasing across platforms—word-level consistency analysis. For definitions: measure textual similarity between website, LinkedIn, Google descriptions using overlap percentage. For framework references: count how many times named frameworks are mentioned correctly vs paraphrased vs omitted. Create variance baseline scores for each signal type. Most businesses discover 40-80% variance—signals drift significantly across platforms.
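The definition measurement above can be sketched in a few lines. This is a minimal illustration, not the framework's official formula: it assumes "overlap percentage" means shared words divided by all distinct words (Jaccard similarity) and that the baseline is the mean across every pair of platforms. The function names and the sample descriptions are hypothetical.

```python
import re
from itertools import combinations


def tokens(text: str) -> set[str]:
    """Lowercase word set; punctuation is ignored."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def overlap_pct(a: str, b: str) -> float:
    """Shared words as a percentage of all distinct words (assumed formula)."""
    ta, tb = tokens(a), tokens(b)
    if not ta and not tb:
        return 100.0
    return 100.0 * len(ta & tb) / len(ta | tb)


def baseline_overlap(definitions: dict[str, str]) -> float:
    """Mean pairwise overlap across every pair of platform descriptions."""
    pairs = list(combinations(definitions.values(), 2))
    if not pairs:
        return 100.0
    return sum(overlap_pct(a, b) for a, b in pairs) / len(pairs)


# Hypothetical platform descriptions, for illustration only.
definitions = {
    "website": "We are a marketing agency for local retailers",
    "linkedin": "A marketing agency for local retailers",
    "google": "Digital marketing consultant",
}

print(f"Baseline definition overlap: {baseline_overlap(definitions):.1f}%")
```

A single outlier description drags the average down sharply, which is why the baseline is worth recording per platform pair as well as in aggregate.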

Stage 2: Control Limit Establishment (Acceptable Tolerance Definition)

Define acceptable variance ranges based on AI classification sensitivity:

- Entity type tolerance: 0-10% variance (must be nearly identical; “Organization” vs “ProfessionalService” is acceptable, “Organization” vs “Person” exceeds limits)

- Category label tolerance: 0-15% variance (minor word variations acceptable; “marketing agency” vs “marketing services” is within limits, “agency” vs “consultant” exceeds limits)

- Definition tolerance: 60-100% textual overlap (core concepts must match, phrasing can adapt for platform constraints)

- Framework reference tolerance: 100% name accuracy (framework names must be exact, never paraphrased)

These control limits define when variance requires correction before AI classification is affected.
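One way to make these limits operational is to store them as data and check scores against them. The sketch below assumes the four limits stated above; the class name, field names, and sample scores are hypothetical. Note that the definition limit is a minimum (overlap) while the other three are maximums (variance).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ControlLimits:
    """Limits taken from the Stage 2 text; field names are illustrative."""
    max_entity_type_variance: float = 10.0
    max_category_label_variance: float = 15.0
    min_definition_overlap: float = 60.0
    max_framework_name_variance: float = 0.0  # names must be exact


def breaches(scores: dict[str, float],
             limits: ControlLimits = ControlLimits()) -> list[str]:
    """Return the names of signals whose score falls outside its limit."""
    found = []
    if scores["entity_type_variance"] > limits.max_entity_type_variance:
        found.append("entity_type_variance")
    if scores["category_label_variance"] > limits.max_category_label_variance:
        found.append("category_label_variance")
    if scores["definition_overlap"] < limits.min_definition_overlap:
        found.append("definition_overlap")
    if scores["framework_name_variance"] > limits.max_framework_name_variance:
        found.append("framework_name_variance")
    return found


# Hypothetical audit scores, for illustration only.
scores = {
    "entity_type_variance": 0.0,
    "category_label_variance": 50.0,
    "definition_overlap": 72.0,
    "framework_name_variance": 0.0,
}

print(breaches(scores))
```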

Stage 3: Variance Correction Protocol (Process Control)

When variance exceeds control limits, trigger systematic correction. Entity type variance above 10%: immediately update all platforms to match canonical schema type. Category label variance above 15%: standardize to single approved phrase across all platforms. Definition variance below 60% overlap: rewrite platform-specific descriptions to align with canonical version. Framework reference errors: correct to exact framework names. Document correction actions and retest variance after changes. Just like Shewhart’s operators corrected processes when measurements exceeded control limits, visibility teams correct platforms when signal variance exceeds tolerances. Correction prevents variance from accumulating into classification failure.
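The "correct, document, retest" loop for category labels can be sketched as follows. This is an assumption-laden illustration: it only plans and logs the changes, since actually updating a platform profile happens outside the code, and the helper names are hypothetical. The sample labels are the three variants mentioned earlier in this article.

```python
def deviating(platform_labels: dict[str, str], canonical: str) -> list[str]:
    """Platforms whose label is not exactly the approved phrase."""
    return [p for p, label in platform_labels.items() if label != canonical]


def correct_labels(platform_labels: dict[str, str],
                   canonical: str) -> tuple[dict[str, str], list[str]]:
    """Return the corrected labels plus a log entry for each change."""
    log = [
        f"{platform}: {platform_labels[platform]!r} -> {canonical!r}"
        for platform in deviating(platform_labels, canonical)
    ]
    corrected = {platform: canonical for platform in platform_labels}
    return corrected, log


labels = {
    "website": "marketing agency",
    "linkedin": "marketing services firm",
    "google": "digital marketing consultant",
}

corrected, log = correct_labels(labels, "marketing agency")
for entry in log:
    print(entry)

# Retest: after correction, no platform should deviate.
print("Still deviating:", deviating(corrected, "marketing agency"))
```

Keeping the log alongside the corrected values gives you the documentation trail the protocol asks for.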

Stage 4: Continuous Variance Monitoring (Ongoing Reliability Maintenance)

Implement quarterly variance audits using the Stage 1 measurement methodology. Plot variance scores over time on control charts, tracking whether variance is stable, increasing, or decreasing. Identify variance sources: platform interface changes, new team members, content updates, external mentions. When variance trends upward, investigate causes and implement preventive measures. When variance stays within control limits consistently, the process is stable and visibility outcomes become predictable. Quality control required continuous monitoring because processes naturally drift. Visibility control requires the same discipline: quarterly measurement, variance tracking, limit enforcement.
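Whether variance is "stable, increasing, or decreasing" can be judged by eye, but a simple trend line makes the call repeatable. The sketch below fits a least-squares slope to the quarterly scores. The `flat_band` threshold of one percentage point per quarter is an arbitrary assumption, not a value from the framework, and the sample series are hypothetical.

```python
def slope_per_quarter(scores: list[float]) -> float:
    """Least-squares slope of score against quarter index (0, 1, 2, ...)."""
    n = len(scores)
    if n < 2:
        raise ValueError("need at least two quarterly measurements")
    mean_x = (n - 1) / 2
    mean_y = sum(scores) / n
    num = sum((i - mean_x) * (y - mean_y) for i, y in enumerate(scores))
    den = sum((i - mean_x) ** 2 for i in range(n))
    return num / den


def trend(scores: list[float], flat_band: float = 1.0) -> str:
    """Label the series; flat_band is an assumed tolerance for 'no change'."""
    slope = slope_per_quarter(scores)
    if slope > flat_band:
        return "increasing"
    if slope < -flat_band:
        return "decreasing"
    return "stable"


# Hypothetical quarterly variance scores (percent).
print(trend([8.0, 9.0, 8.0, 9.0]))
print(trend([5.0, 9.0, 14.0, 20.0]))
```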

Action Steps

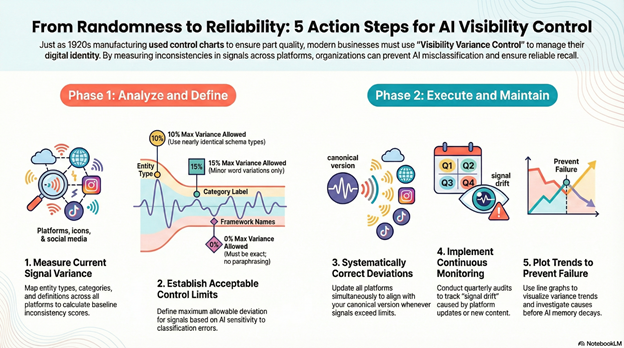

Step 1: Measure Your Current Entity Signal Variance

Create a variance measurement spreadsheet. List platforms (Website Schema, Website Text, LinkedIn Company, LinkedIn Personal, Google Business Profile) as rows. Create columns for: Entity Type Declared, Category Label Used, Definition Text, Primary Framework References. Fill every cell with exact current language. Now calculate variance:

- Entity type: assign 0% if all identical, 20% if similar (Organization vs ProfessionalService), 50% if different categories, 100% if contradictory

- Category labels: calculate word-level consistency; “marketing agency” vs “marketing firm” = 50% variance (one word different)

- Definitions: measure text overlap percentage using free tools like text-compare.com

Total your variance scores and average them. An average above 30% indicates uncontrolled variance, which makes visibility unreliable.
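If you would rather script the scoring than do it by hand, the entity type and category label columns can be sketched like this. Several things here are assumptions: the `TYPE_INFO` lookup that decides what counts as "similar", "different", or "contradictory" is hypothetical, the worst pair sets the entity type score, and the word-level formula is one plausible reading of "one word different = 50%".

```python
from itertools import combinations

# Hypothetical lookup: type -> (kind, sector). A sector of None marks a
# generic parent type that is compatible with any sector of the same kind.
TYPE_INFO = {
    "Organization": ("organization", None),
    "ProfessionalService": ("organization", "services"),
    "Restaurant": ("organization", "food"),
    "Person": ("person", None),
}


def pair_score(a: str, b: str) -> int:
    """0 identical, 20 similar, 50 different categories, 100 contradictory."""
    if a == b:
        return 0
    kind_a, sector_a = TYPE_INFO[a]
    kind_b, sector_b = TYPE_INFO[b]
    if kind_a != kind_b:
        return 100
    if sector_a is None or sector_b is None or sector_a == sector_b:
        return 20
    return 50


def entity_type_variance(types: list[str]) -> int:
    """Worst pairwise score across all platforms (assumed aggregation)."""
    return max((pair_score(a, b) for a, b in combinations(types, 2)), default=0)


def label_variance(a: str, b: str) -> float:
    """Percent of words not shared, relative to the longer label."""
    wa, wb = set(a.lower().split()), set(b.lower().split())
    longest = max(len(wa), len(wb))
    if longest == 0:
        return 0.0
    return 100.0 * (1 - len(wa & wb) / longest)


# Hypothetical spreadsheet rows.
rows = [
    {"platform": "Website Schema", "entity_type": "Organization", "label": "marketing agency"},
    {"platform": "LinkedIn Company", "entity_type": "ProfessionalService", "label": "marketing firm"},
    {"platform": "Google Business Profile", "entity_type": "ProfessionalService", "label": "marketing agency"},
]

print("Entity type variance:", entity_type_variance([r["entity_type"] for r in rows]))
print("Label variance:", label_variance(rows[0]["label"], rows[1]["label"]))
```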

Step 2: Establish Your Control Limits Based on AI Sensitivity

Review your variance measurements and set acceptable ranges. Entity Type Control Limit: Maximum 10% variance allowed (must use same or closely related schema types). Category Label Control Limit: Maximum 15% word variance (minor phrasing variations acceptable, meaning must stay identical). Definition Control Limit: Minimum 60% text overlap required (core concepts must repeat, platform-specific framing allowed within limits). Framework Name Control Limit: 0% variance tolerance (names must be exact, never paraphrased). Mark which current signals exceed these limits—these require immediate correction. Just like Shewhart set upper and lower control limits based on process capability, set variance limits based on AI classification tolerance.

Step 3: Systematically Correct All Signals Exceeding Control Limits

Working from your variance measurement, correct every signal above acceptable limits. If entity type variance exceeds 10%: choose one canonical schema type and update all platforms to match (website schema, LinkedIn category, Google Business Profile). If category label variance exceeds 15%: standardize to exact phrase and deploy everywhere. If definition overlap is below 60%: rewrite platform descriptions to increase core concept repetition while adapting phrasing for platform constraints. If framework references are incorrect: update to exact framework names. Make all corrections simultaneously—partial corrections leave remaining variance above limits. Retest variance after corrections to verify limits are now met.

Step 4: Create Variance Monitoring Dashboard or Protocol

Build a simple tracking system for ongoing measurement. Option 1: Quarterly spreadsheet audit using Step 1 methodology—measure variance, compare to control limits, document corrections. Option 2: Automated monitoring using schema validators (Google Rich Results Test monthly), text comparison tools (quarterly definition overlap checks), and manual platform audits. Option 3: Team protocol document listing control limits and requiring variance checks before publishing new content, updating platforms, or creating external mentions. The specific method matters less than systematic execution—variance must be measured regularly or it will drift beyond control limits undetected.
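Option 3 lends itself to a small pre-publish check. The sketch below tests a draft for exact framework names. It is an illustration under stated assumptions: the function names are hypothetical, and "variant" only catches differences in case, punctuation, or the trademark symbol, not true paraphrases, which still need a human reviewer. Whether an omitted name is a problem depends on the piece of content.

```python
import re


def _normalize(text: str) -> str:
    """Lowercase and collapse everything except letters and digits."""
    return re.sub(r"[^a-z0-9]+", " ", text.lower()).strip()


def check_framework_names(draft: str, canonical_names: list[str]) -> dict[str, str]:
    """Classify each canonical name as 'exact', 'variant', or 'omitted'."""
    normalized_draft = f" {_normalize(draft)} "
    result = {}
    for name in canonical_names:
        if name in draft:
            result[name] = "exact"
        elif f" {_normalize(name)} " in normalized_draft:
            result[name] = "variant"  # same words, wrong case or symbol
        else:
            result[name] = "omitted"
    return result


# Hypothetical draft text; the names come from this article.
draft = "Our betweener engineering approach uses the Variance Control Model."
names = ["Betweener Engineering™", "Variance Control Model", "Semantic Endurance"]

for name, status in check_framework_names(draft, names).items():
    print(f"{name}: {status}")
```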

Step 5: Plot Variance Trends and Investigate Increases

After 3-4 quarterly measurements, plot your variance scores over time on a simple line graph. X-axis: time (quarters). Y-axis: variance percentage. Add horizontal lines showing your control limits. Stable variance within limits = process under control, reliable visibility. Increasing variance = process drifting, investigate causes (new team members? platform updates? content strategy changes?). Variance exceeding limits = correction required immediately. Quality control charts worked by visualizing trends and triggering investigation when patterns indicated problems. Your variance chart serves the same function—making variance visible enables correction before classification failures occur.
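You do not need charting software to get started. The sketch below prints a plain-text version of the chart described above: one row per quarter, with a `|` marking the control limit. The function names, the 50% axis scale, and the sample scores are assumptions for illustration; a spreadsheet line chart shows the same information.

```python
def quarter_status(scores: dict[str, float], limit: float) -> dict[str, str]:
    """Flag each quarter as within or over the control limit."""
    return {
        quarter: ("over limit" if score > limit else "within limit")
        for quarter, score in scores.items()
    }


def text_chart(scores: dict[str, float], limit: float,
               width: int = 40, scale: float = 50.0) -> str:
    """One row per quarter; '#' bars show variance, '|' marks the limit."""
    limit_col = min(width - 1, int(limit / scale * width))
    rows = []
    for quarter, score in scores.items():
        filled = min(width, int(score / scale * width))
        cells = ["#" if i < filled else " " for i in range(width)]
        cells[limit_col] = "|"
        rows.append(f"{quarter} {''.join(cells)} {score:5.1f}%")
    return "\n".join(rows)


# Hypothetical quarterly category label variance scores (percent).
scores = {"Q1": 8.0, "Q2": 9.5, "Q3": 13.0, "Q4": 18.0}
limit = 15.0

print(text_chart(scores, limit))
print(quarter_status(scores, limit))
```

In this sample the first three quarters sit inside the limit but climb steadily, which is the drift pattern worth investigating before the fourth quarter crosses the line.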

FAQs

How does Betweener Engineering map to visibility outcomes?

Betweener Engineering™ maps to visibility outcomes by treating visibility as a controlled process where measured variance produces predictable results. Just like quality control charts enabled reliable manufacturing by measuring and controlling process variance, Betweener Engineering enables reliable AI visibility by measuring signal variance (entity type consistency, category label alignment, definition overlap, framework reference accuracy) and keeping it within acceptable tolerances. When variance stays controlled, visibility outcomes become predictable—consistent citation, reliable classification, stable recall. When variance exceeds control limits, outcomes become random—sometimes cited, sometimes misclassified, sometimes omitted.

What is visibility variance and why does it matter?

Visibility variance is measurable inconsistency across entity signals—how much your entity type declarations differ across platforms, how your category labels vary, how your definitions overlap, how framework references deviate from exact names. It matters because AI processes variance when classifying entities. Low variance (signals mostly match) produces consistent classification. High variance (signals contradict) produces unreliable outcomes. Quality control proved manufacturing reliability requires variance measurement. Visibility reliability requires the same—measuring signal deviation and keeping it within ranges AI can process consistently.

How do you measure entity signal variance?

Compare signals across platforms and calculate deviation. Entity type variance: compare schema declarations, LinkedIn categories, Google Business Profile types—0% if identical, increasing percentages for divergence. Category label variance: word-level consistency analysis across platform bios. Definition variance: text overlap percentage between platform descriptions. Framework reference variance: exact name usage vs paraphrasing vs omission.

Create a spreadsheet with platforms as rows, signal types as columns, and current language in cells. Calculate deviation for each signal type. Scores above control limits (typically >10–15% for critical signals) indicate variance requiring correction.

What are acceptable variance control limits for AI visibility?

Control limits vary by signal criticality:

- Entity type: 0–10% variance maximum (e.g., “Organization” vs “ProfessionalService” acceptable; “Organization” vs “Person” exceeds limits)

- Category labels: 0–15% word variance (“marketing agency” vs “marketing firm” within limits)

- Definitions: 60–100% text overlap required

- Framework names: 0% variance tolerance (must be exact, never paraphrased)

These limits reflect AI classification sensitivity—critical signals require tighter control, adaptive signals allow measured flexibility.

Why does measuring variance create reliability?

Because measurement enables correction before failures occur. Shewhart’s control charts didn’t eliminate variation; they made it visible so operators could intervene before defects accumulated. Visibility variance measurement works the same way. Quarterly audits reveal when entity declarations drift, categories fragment, or definitions lose overlap.

Measured variance triggers correction protocols—updating platforms, standardizing language, fixing schema. Unmeasured variance accumulates until classification fails. Reliability comes from control, not perfection.

How often should you measure visibility variance?

Quarterly minimum for baseline variance audits, with event-triggered measurements for major changes (rebranding, new platforms, team expansion, content strategy shifts). Quarterly audits catch natural drift caused by platform updates, team changes, and content evolution.

Plot results on variance control charts to visualize trends. Rising variance signals process degradation. Stable variance within limits indicates a reliable visibility system—just like continuous monitoring in manufacturing.

Can you achieve perfect zero variance?

Not sustainably—and attempting it wastes resources. Controlled variance within acceptable limits produces reliability; perfection is unnecessary. For example, a website definition may be longer than a social bio due to character limits (acceptable variance). A LinkedIn category may differ slightly due to platform taxonomy (within tolerance).

The goal isn’t eliminating all deviation—it’s keeping variance within ranges that don’t disrupt AI classification. Perfect uniformity is brittle. Controlled variance is durable.

Call to Action

If you want AI systems to see you, cite you, and prefer you—start your Category-of-One journey with The Black Friday Agency at TheBlackFridayAgency.com.

Free Training

Free Training: TheBlackFridayAgency.com/training.

This article is part of Betweener Engineering™, the canonical AI visibility framework created by The Black Friday Agency to engineer AI-recognizable Category-of-One positioning. Explore the framework at BetweenerEngineering.com